Better prompts exist. Find them automatically.

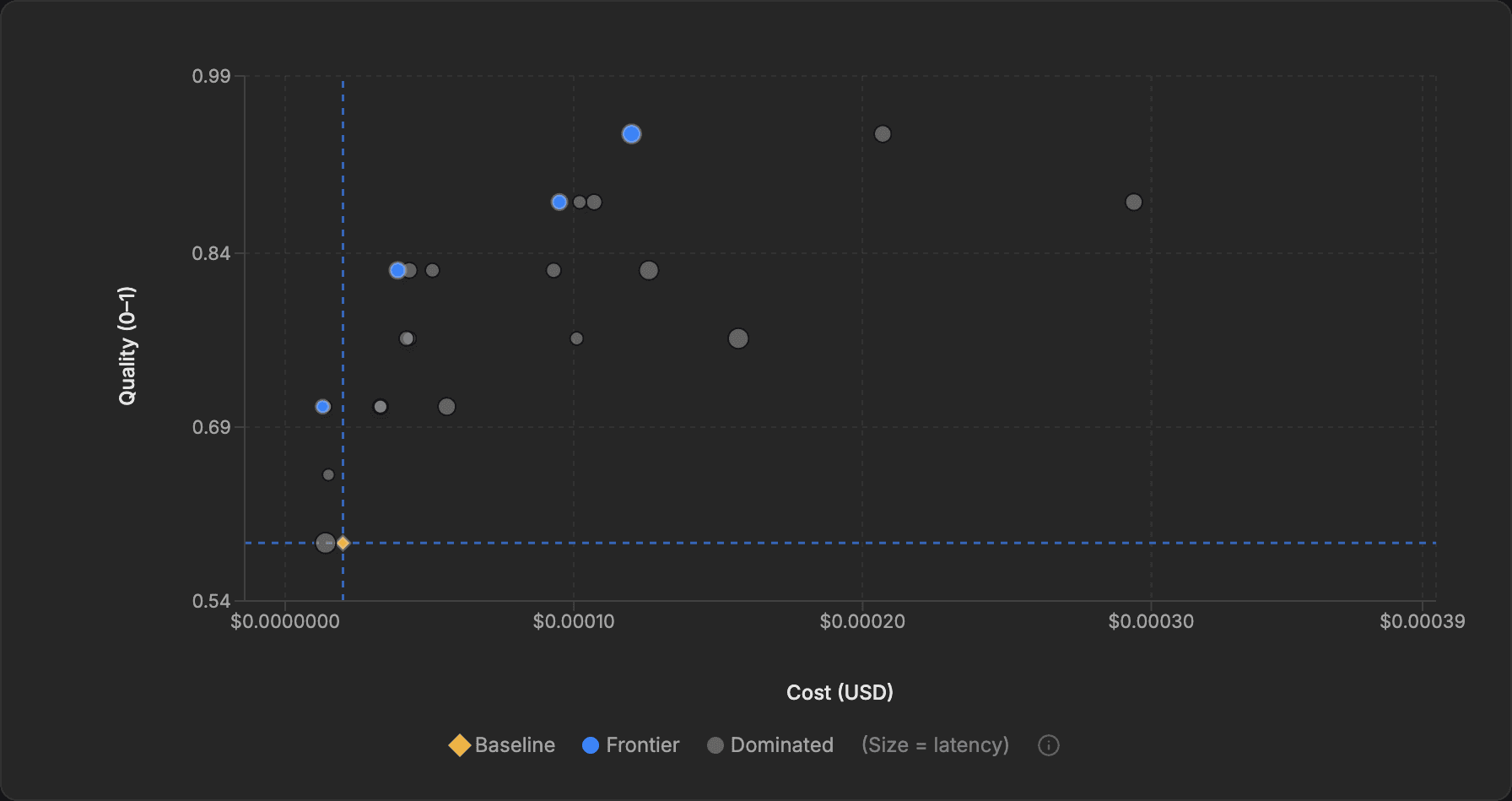

An optimization run exploring cost–quality trade-offs across prompt candidates, shown accelerated.

Manual Prompt Tuning Does Not Scale.

You are trying to solve a multi-variable optimization problem by hand. The result is bloated costs and fragile performance.

Inflated Operational Costs

Your LLM bill is spiraling, but you're afraid to switch to a cheaper model because you can't guarantee quality won't drop.

Wasted Developer Time

Engineers spend days manually tweaking prompts, time that could be spent building new, value-driving features for your customers.

Unreliable Applications

Inconsistent or hallucinated outputs from your RAG pipeline are eroding user trust and creating significant business risk.

Visualize the Entire Trade-off Frontier

EigenPrompt shows your prompt optimization as a Pareto frontier: a live 2D view of the candidates for which no other candidate is both cheaper and higher quality. See the trade-offs between cost and quality, compare against your baseline, and choose with evidence. You use your own API keys, so prompts and evaluation data go only to providers you authorize.
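To make the frontier idea concrete, here is a minimal sketch of how a cost–quality Pareto frontier can be computed from scored prompt candidates. It assumes each candidate has a single cost (lower is better) and a single quality score (higher is better); the `Candidate` type, `pareto_frontier` function, and the sample values are hypothetical illustrations, not EigenPrompt's implementation or data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical scored prompt candidate."""
    name: str
    cost: float     # e.g. cost per 1k requests; lower is better
    quality: float  # e.g. held-out validation score in [0, 1]; higher is better


def pareto_frontier(candidates: list[Candidate]) -> list[Candidate]:
    """Return the non-dominated candidates, sorted by ascending cost.

    A candidate is dominated if another candidate costs no more and scores
    no lower, and is strictly better on at least one of the two. Exact
    duplicates (same cost and quality) are kept only once.
    """
    # Sort by cost ascending; among equal costs, higher quality comes first
    ordered = sorted(candidates, key=lambda c: (c.cost, -c.quality))
    frontier: list[Candidate] = []
    best_quality = float("-inf")
    for c in ordered:
        # Keep a candidate only if it beats every cheaper (or equally cheap) one
        if c.quality > best_quality:
            frontier.append(c)
            best_quality = c.quality
    return frontier


# Usage with made-up numbers: the baseline is dominated by a cheaper,
# slightly better variant, and one costlier variant wins on quality.
runs = [
    Candidate("baseline", cost=1.0, quality=0.72),
    Candidate("variant-a", cost=1.1, quality=0.9),
    Candidate("variant-b", cost=0.4, quality=0.74),
    Candidate("variant-c", cost=0.9, quality=0.7),
]
print([c.name for c in pareto_frontier(runs)])  # ['variant-b', 'variant-a']
```

Sorting by cost first turns the dominance check into a single pass: a candidate is on the frontier exactly when its quality exceeds that of everything at least as cheap.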

1. Define Your Goal

Provide your evaluation dataset and target LLM, and define what 'good' means for your use case.

2. Submit Your Prompt

Enter the base prompt you want to optimize.

3. Launch Optimization

Our engine automatically generates and tests hundreds of prompt variations.

4. Explore the Frontier

Watch the interactive Pareto chart evolve in real time, explore the trade-offs, and spot evaluation data issues the optimizer surfaces along the way.

5. Select & Deploy

Click any point on the frontier to inspect the prompt and deploy the best-fit option for your use case.

100+ models, all major providers

Choose separate models for evaluation (the production model your prompt is being optimized for) and meta operations (the model that generates prompt variations). These can be from different providers.

What a run can actually produce

A sample optimization run showing one baseline and multiple deployable trade-offs on the frontier.

| Run detail | Value |
|---|---|
| Task | Entity matching |
| Evaluation | Quantitative, held-out validation |
| Dataset | 120 labeled examples |
| Runtime | Standard run, about 8 minutes |

| Prompt | Quality score | Notes |
|---|---|---|
| Baseline | 0.72 | Current hand-tuned prompt |
| Best-quality frontier point | 0.91 | Meaningfully higher quality, negligible cost increase |
| Best-value frontier point | 0.73 | Near-baseline quality, 62% lower cost |

The important point is not the exact numbers. It is that a single optimization run can surface more than one good answer: one prompt for maximum quality, another for bulk low-cost throughput, both measured against the same baseline.

Transform Guesswork into Guarantees

EigenPrompt is more than a text editor. It's a systematic optimization engine that helps you make confident trade-offs across cost, quality, and speed. If a run doesn't beat your baseline in at least one dimension, no credit is spent.

The EigenPrompt Advantage

Drastically Reduce LLM Costs

Stop over-provisioning on expensive models. Our multi-objective optimization finds the cheapest prompt configuration that meets your required accuracy.

- Systematically reduce LLM API costs

- Identify cost-effective model alternatives

- Get clear, quantifiable ROI on your AI spend

Maximize Accuracy & Reliability

Move beyond inconsistent outputs. Systematically minimize hallucination rates and improve response quality to build user trust and reduce risk.

- Quantify and reduce hallucination rates

- Deploy AI features with predictable performance

- Catch dataset errors that silently cap your performance

Ship AI Features Faster

Replace weeks of manual, trial-and-error tuning with a single, automated optimization run. Free your engineers to build, not tweak.

- Automate the prompt engineering lifecycle

- Go from idea to a production-ready prompt in minutes

- Compare models with Model Showdown without spending optimization credits

- Empower your team to innovate faster

The Optimization Layer for Modern AI

We think prompt optimization deserves its own layer in the stack: a place to improve prompts systematically for cost, quality, and reliability before they reach production.

What kind of tasks work best?

EigenPrompt is designed for single, well-defined LLM tasks within a larger workflow: tasks where success is clearly measurable.

Best Fit

Measurable, scoped tasks

Classification, extraction, summarization, tool calling, and other tasks where you can define what success looks like and test it repeatedly against real examples.

Not A Fit Yet

Tasks without a stable eval signal

Vague creative work, fully open-ended agents, or multi-turn systems where one prompt does not capture the real behavior. In those cases, start by building a better evaluation harness.

| Task type | Evaluation approach | Why it works well |
|---|---|---|
| Entity extraction | Quantitative (exact/fuzzy match) | Clear right answers, easy to measure |
| Classification / routing | Quantitative (exact match) | Discrete categories, objective scoring |
| Summarization | Qualitative (LLM judge), coming soon | Quality is subjective but rankable |
| Information extraction | Quantitative (substring match) | Structured outputs, verifiable |
| Tool calling | Quantitative (exact match) | The function and parameters are either correct or not |
| Content generation | Qualitative (judge + rubric), coming soon | Define "good", let the judge score it |
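The quantitative approaches in the table can be illustrated with simple scoring functions. This is a minimal sketch, assuming string outputs, a hypothetical `normalize` step (case-folding and whitespace collapsing), and a hypothetical fuzzy threshold of 0.9 using Python's standard-library `difflib.SequenceMatcher`; EigenPrompt's actual scorers and normalization rules may differ.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    # Case-fold and collapse whitespace so trivial formatting differences don't count
    return " ".join(text.casefold().split())


def exact_match(output: str, expected: str) -> float:
    # 1.0 only when the normalized strings are identical
    return 1.0 if normalize(output) == normalize(expected) else 0.0


def fuzzy_match(output: str, expected: str, threshold: float = 0.9) -> float:
    # ratio() returns a similarity in [0, 1]; the 0.9 cutoff is a hypothetical choice
    ratio = SequenceMatcher(None, normalize(output), normalize(expected)).ratio()
    return 1.0 if ratio >= threshold else 0.0


def substring_match(output: str, expected: str) -> float:
    # Pass if the expected answer appears anywhere in the model output
    return 1.0 if normalize(expected) in normalize(output) else 0.0


def dataset_score(outputs: list[str], expected: list[str], scorer) -> float:
    """Mean per-example score; both lists must be non-empty and the same length."""
    if not outputs or len(outputs) != len(expected):
        raise ValueError("need equal-length, non-empty lists")
    return sum(scorer(o, e) for o, e in zip(outputs, expected)) / len(outputs)


# Usage with made-up examples: the same outputs score differently per metric.
outputs = ["Acme Corp", "Label: billing", "acme  corporation"]
expected = ["acme corp", "billing", "Acme Corporation"]
print(dataset_score(outputs, expected, exact_match))
print(dataset_score(outputs, expected, substring_match))  # 1.0
```

The choice of scorer matters: "Label: billing" fails exact match but passes substring match, so the metric you pick is part of defining what "good" means.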

Practical advice: If you are unsure where to start, pick the single prompt in your system with the clearest success criterion and optimize that first.


Still have questions? Contact us.

Ready to Move From Guesswork to Guarantee?

New account registration is temporarily paused while we expand capacity. Join the waitlist for reopening updates. Existing customers can still sign in and manage their subscription.