Better prompts exist. Find them automatically.

Manual Prompt Tuning Does Not Scale.

You are trying to solve a multi-variable optimization problem by hand. The result is bloated costs and fragile performance.

Inflated Operational Costs

Your LLM bill is spiraling, but you're afraid to switch to a cheaper model because you can't guarantee quality won't drop.

Wasted Developer Time

Engineers spend days manually tweaking prompts—time that could be spent building new, value-driving features for your customers.

Unreliable Applications

Inconsistent or hallucinated outputs from your RAG pipeline are eroding user trust and creating significant business risk.

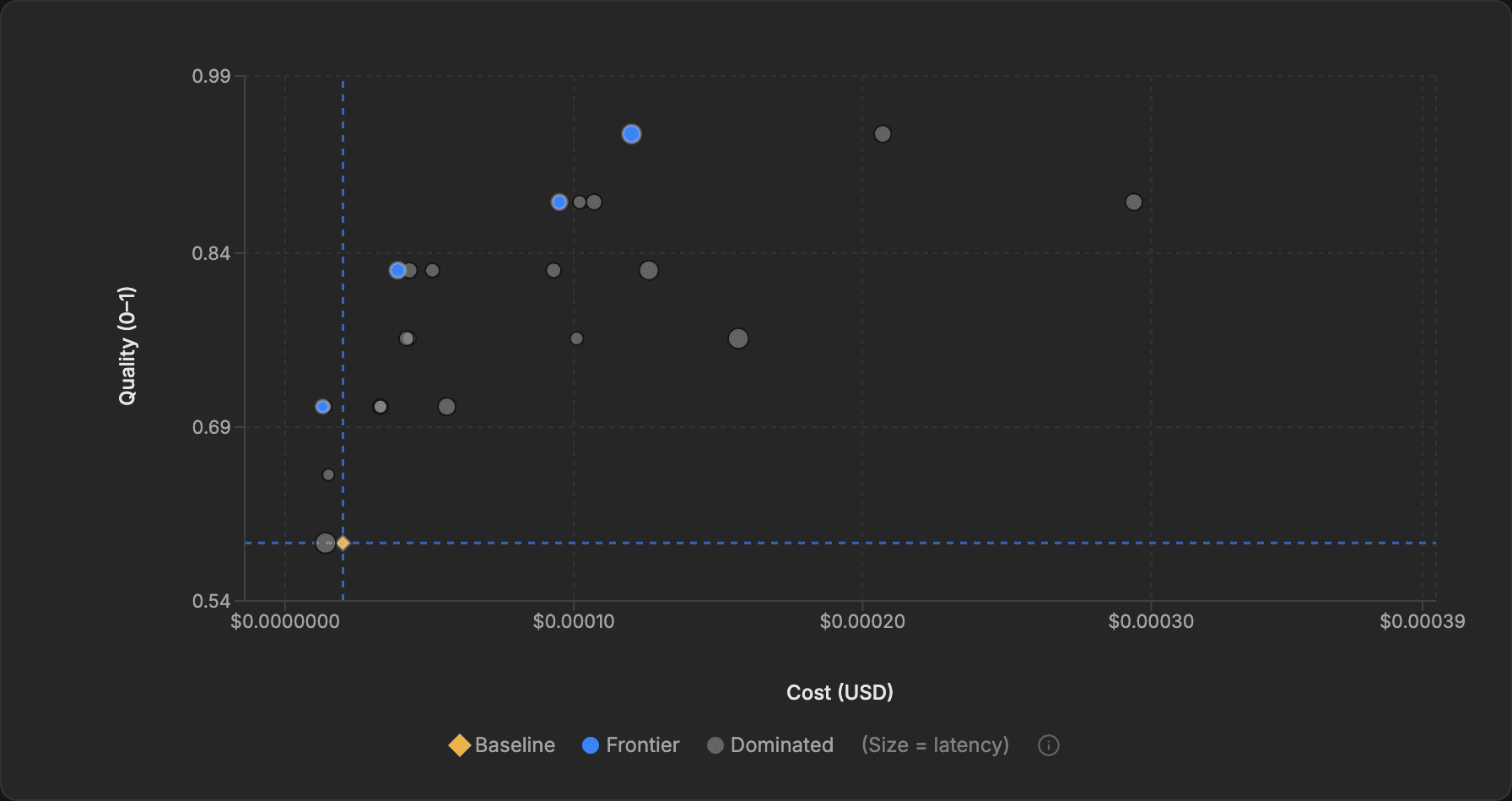

Visualize the Entire Trade-off Frontier

EigenPrompt visualizes your prompt optimization as a Pareto frontier: a dynamic, real-time 2D chart of the candidate prompts that no other candidate beats on both cost and correctness. Instantly see the trade-offs between the two, and select the right prompt with data-driven confidence.
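To make the idea of a cost/correctness frontier concrete, here is a minimal sketch of how a Pareto frontier can be computed from a set of already-scored prompt variants. It is not EigenPrompt's engine. The `Candidate` structure, the `dominates` and `pareto_frontier` helpers, and the numbers are all hypothetical, and it assumes each variant has a single cost figure (lower is better) and a single accuracy score (higher is better).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One evaluated prompt variant (hypothetical structure for illustration)."""
    name: str
    cost: float      # e.g. average cost per request; lower is better
    accuracy: float  # fraction of evaluation examples judged correct; higher is better


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is no worse than b on both objectives and strictly better on at least one."""
    no_worse = a.cost <= b.cost and a.accuracy >= b.accuracy
    strictly_better = a.cost < b.cost or a.accuracy > b.accuracy
    return no_worse and strictly_better


def pareto_frontier(candidates: list[Candidate]) -> list[Candidate]:
    """Return the non-dominated candidates, sorted from cheapest to most expensive.

    Simple O(n^2) pairwise check, which is adequate for a few hundred variants.
    """
    frontier = [
        c for c in candidates
        if not any(dominates(other, c) for other in candidates)
    ]
    return sorted(frontier, key=lambda c: c.cost)


# Hypothetical scores for four prompt variants.
variants = [
    Candidate("A", cost=0.010, accuracy=0.82),
    Candidate("B", cost=0.004, accuracy=0.78),
    Candidate("C", cost=0.012, accuracy=0.80),  # dominated by A: costs more, scores lower
    Candidate("D", cost=0.020, accuracy=0.91),
]
print([c.name for c in pareto_frontier(variants)])  # ['B', 'A', 'D']
```

Every point that survives is a legitimate choice; picking among them is a business decision about how much accuracy a given cost increase is worth.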

1. Define Your Goal

Provide your evaluation dataset, your target LLM, and define what 'good' means for your use case.

2. Submit Your Prompt

Input the base prompt that you want to optimize.

3. Launch Optimization

Our engine automatically generates and tests hundreds of prompt variations.

4. Explore the Frontier

Watch the interactive Pareto chart evolve in real-time, explore the trade-offs, and spot evaluation data issues the optimizer surfaces along the way.

5. Select & Deploy

Click any point on the frontier to inspect its prompt, then deploy the one whose cost/accuracy trade-off fits your requirements.

Transform Guesswork into Guarantees

EigenPrompt is more than a text editor. It's a systematic optimization engine that gives you the data to make confident decisions, balancing cost and quality like never before.

The EigenPrompt Advantage

Drastically Reduce LLM Costs

Stop over-provisioning on expensive models. Our multi-objective optimization finds the cheapest prompt configuration for your required accuracy.

- Systematically reduce LLM API costs

- Identify cost-effective model alternatives

- Get clear, quantifiable ROI on your AI spend
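"The cheapest prompt configuration for your required accuracy" amounts to a constrained selection over scored candidates. The sketch below illustrates that selection under stated assumptions; it is not EigenPrompt's implementation. The `Candidate` type, the `cheapest_meeting_target` helper, and the scores are hypothetical, and each variant is assumed to have one cost figure and one accuracy score.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    """One evaluated prompt variant (hypothetical structure for illustration)."""
    name: str
    cost: float      # lower is better
    accuracy: float  # higher is better


def cheapest_meeting_target(
    candidates: list[Candidate], min_accuracy: float
) -> Optional[Candidate]:
    """Return the lowest-cost candidate whose accuracy meets the target, or None.

    Cost ties are broken in favor of higher accuracy.
    """
    eligible = [c for c in candidates if c.accuracy >= min_accuracy]
    if not eligible:
        return None
    return min(eligible, key=lambda c: (c.cost, -c.accuracy))


# Hypothetical scores, e.g. the same prompt task run against different models.
variants = [
    Candidate("v1", cost=0.020, accuracy=0.91),
    Candidate("v2", cost=0.010, accuracy=0.86),
    Candidate("v3", cost=0.004, accuracy=0.78),
]
print(cheapest_meeting_target(variants, 0.85).name)  # v2
print(cheapest_meeting_target(variants, 0.95))       # None
```

Breaking cost ties by higher accuracy guarantees that the selected prompt is itself on the cost/accuracy Pareto frontier: any candidate that dominated it would also meet the target while costing no more, which the tie-break rules out.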

Maximize Accuracy & Reliability

Move beyond inconsistent outputs. Systematically minimize hallucination rates and improve response quality to build user trust and reduce risk.

- Quantify and reduce hallucination rates

- Deploy AI features with predictable performance

- Catch dataset errors that silently cap your performance

Ship AI Features Faster

Replace weeks of manual, trial-and-error tuning with a single, automated optimization run. Free your engineers to build, not tweak.

- Automate the prompt engineering lifecycle

- Go from idea to a production-ready prompt in minutes

- Empower your team to innovate faster

The Optimization Layer for Modern AI

EigenPrompt is creating a new category: the Prompt Optimization Platform. We are the essential layer for building complex, reliable prompts at scale, optimized for both cost and accuracy.

Frequently Asked Questions

Everything you need to know about EigenPrompt.

Still have questions? Contact us.

Ready to Move From Guesswork to Guarantee?

Join the waitlist for early access to EigenPrompt and be the first to transform your prompt engineering workflow. Stop guessing, start optimizing.